If you do research in the learning sciences, there almost certainly comes a point at which you realise how difficult it is to model and describe a learning process. Among human cognitive processes, learning is definitely one of the most complex and fascinating, as it encompasses several dimensions, including motivation, knowledge and emotional states.

The incompleteness of any such model is even more evident when trying to trace learning using data-driven approaches, such as sensors and analytics. So many uncontrolled variables influence the learning process, negatively and positively, that it is very easy to fall into the trap of the streetlight effect: looking only where the data happens to be easy to collect.

In one of my previous pieces, I described this phenomenon with the metaphor of an iceberg. The visible part is the multimodal data that sensors or software logs can capture, such as the learning behaviour and the learning context. The part underwater represents the unobservable dimensions of learning. There is a big philosophical debate around these invisible forces that facilitate (or hinder) learning. In any case, one certainty remains: learning is an extremely uncontrolled process which is difficult to measure.

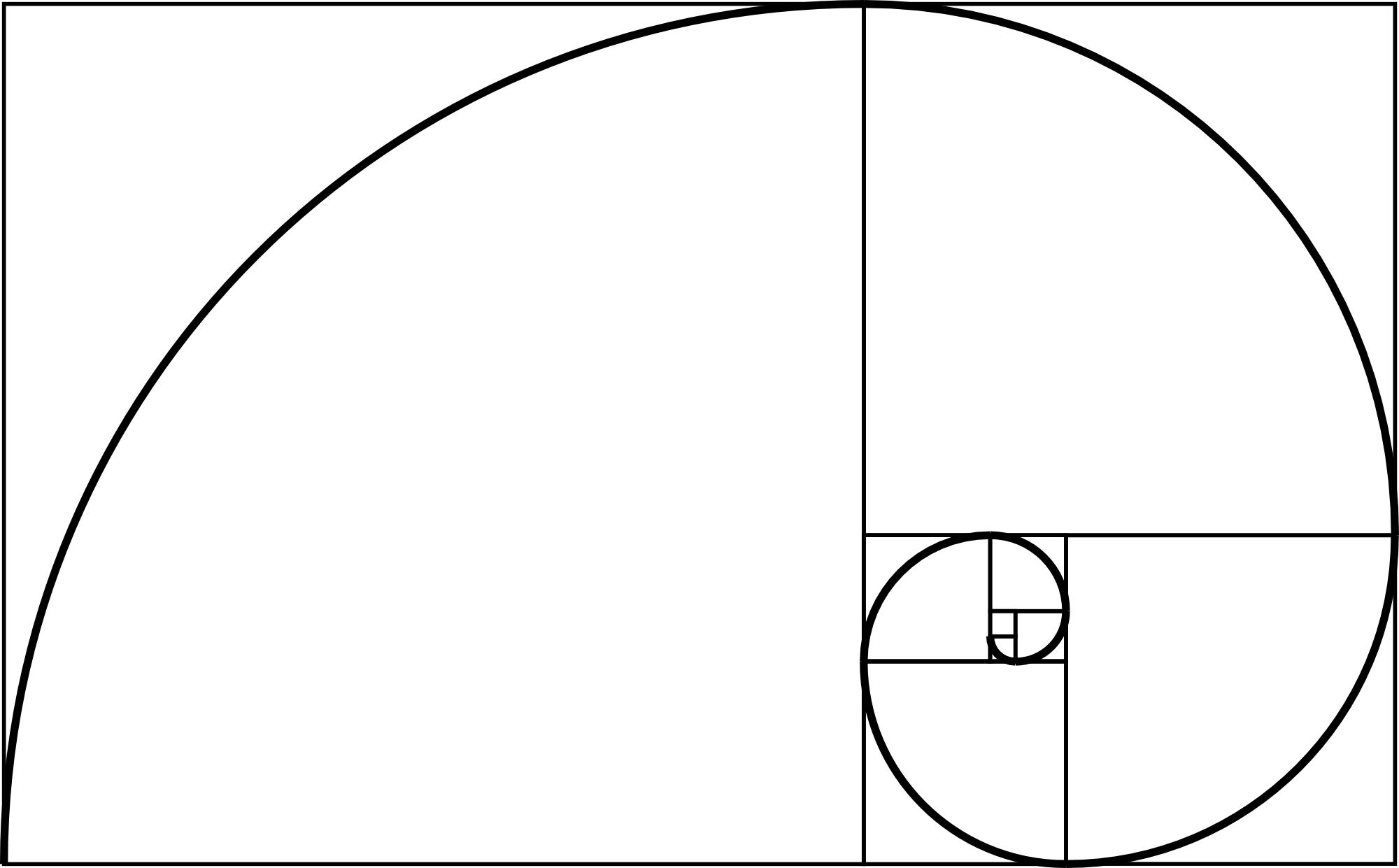

Having reluctantly acknowledged this, I started thinking that we should stop guessing about how learning happens and instead make things simpler and better controlled. Rather than guessing what lies at the very bottom of the iceberg, we first need to make sense of what is directly under the water. I symbolically call this part the golden mean: the middle layer which sensors and analytics cannot grasp automatically.

This layer corresponds to inter-action recognition, that is, identifying interactions with things or people, such as "flipping a page", "chatting with a peer" or "watching a video". They can also be motor actions not addressed to any person or object, such as "standing up", "raising voice" or "walking out". All these inter-actions are probably not very relevant to the attainment of certain learning outcomes or the achievement of learning goals. However, inter-action recognition is a layer of abstraction which, most of the time, is not built into the sensors suitable for learning. In fact, teaching a computer to reach this level of inference and reasoning is already quite a complex challenge, requiring signal processing, machine learning, predictive analytics and so on.
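
To make the idea of inter-action recognition a bit more concrete, here is a minimal sketch in Python of one possible pipeline: cut a sensor signal into sliding windows, compute two simple features per window, and assign each window to the closest known action. Everything in it is an assumption made for illustration: the function names, the "sitting still" label, the made-up accelerometer values and the choice of a nearest-centroid classifier, which stands in for whatever machine learning method a real system would use.

```python
from math import dist
from statistics import mean, pstdev


def window_features(signal, size, step):
    """Split a 1-D signal into sliding windows; return (mean, std) per window."""
    features = []
    for start in range(0, len(signal) - size + 1, step):
        window = signal[start:start + size]
        features.append((mean(window), pstdev(window)))
    return features


def fit_centroids(labelled_features):
    """Average the feature vectors of each label (label -> list of tuples)."""
    return {
        label: tuple(mean(dimension) for dimension in zip(*features))
        for label, features in labelled_features.items()
    }


def recognise(feature, centroids):
    """Return the label whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: dist(feature, centroids[label]))


# Made-up accelerometer magnitudes, not real recordings (hypothetical data).
still = [1.0, 1.01, 0.99, 1.0, 1.02, 0.98, 1.0, 1.01]
moving = [1.0, 1.6, 0.5, 1.8, 0.4, 1.5, 0.7, 1.4]

centroids = fit_centroids({
    "sitting still": window_features(still, size=4, step=2),
    "standing up": window_features(moving, size=4, step=2),
})

# A new, unlabelled signal: calm at first, then a burst of movement.
new_signal = [1.0, 0.99, 1.01, 1.0, 1.7, 0.4, 1.6, 0.6]
labels = [
    recognise(feature, centroids)
    for feature in window_features(new_signal, size=4, step=2)
]
print(labels)
```

Even this toy version needs labelled examples, a windowing choice and a feature choice, which hints at why recognising inter-actions from real multimodal data is a challenge in its own right.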

Once the inter-actions in the learning process have been properly recognised, it is time to process their semantics, i.e. to look deeper into the actual contribution of every single action in relation to the learning goals.